Humour, comics, tech, law, software, reviews, essays, articles and HOWTOs intermingled with random philosophy now and then

Filed under: Site management by Hari

Posted on Mon, Oct 19, 2020 at 20:18 IST (last updated: Mon, Oct 19, 2020 @ 20:20 IST)

I will not be updating this blog any more. Head over to harishankar.net, where I'm restarting with a highly original and innovative title: Hari's Corner 2.

This site (harishankar.org) will remain as it is, as an archive, without any further content updates.

I'm hoping to become more active in blogging and I hope you'll join me there.

Filed under: Artwork/Portraits/Caricatures by Hari

Posted on Thu, Sep 24, 2020 at 20:41 IST (last updated: Thu, Sep 24, 2020 @ 20:41 IST)

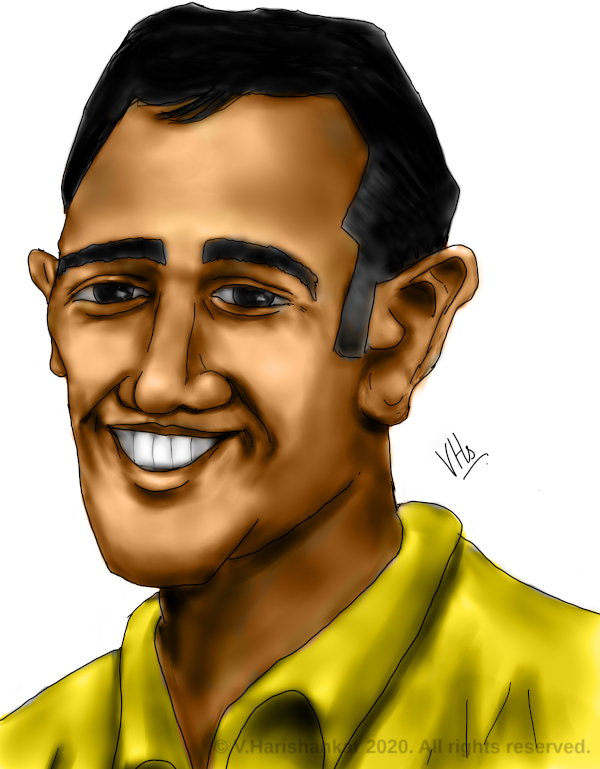

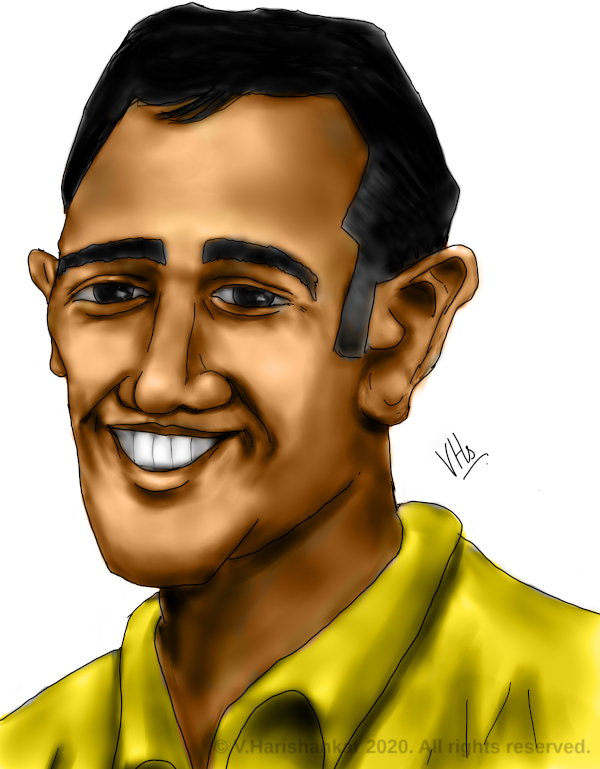

Caricature of cricketer and former Indian captain Mahendra Singh Dhoni - painted in Krita with my XP-Pen Artist 10S.

Filed under: Artwork/Landscapes/Other by Hari

Posted on Wed, Sep 23, 2020 at 15:32 IST (last updated: Wed, Sep 23, 2020 @ 15:32 IST)

Cartoonish rock monster. Inspired by the golem from an old RPG video game, Ultima VIII - Pagan.

Filed under: Artwork/Landscapes/Other by Hari

Posted on Tue, Sep 22, 2020 at 16:22 IST (last updated: Tue, Sep 22, 2020 @ 16:23 IST)

Study of smoke from an incense stick and a Sambrani (benzoin) cup. Painted using Krita with my XP-Pen Artist 10S.

Filed under: Artwork/Landscapes/Other by Hari

Posted on Mon, Sep 21, 2020 at 21:28 IST (last updated: Mon, Sep 21, 2020 @ 21:28 IST)

Something different from my usual work. Still life - pomegranate bowl. Painted in Krita with my XP-Pen Artist 10S.

Filed under: Artwork/Landscapes/Other by Hari

Posted on Sun, Sep 20, 2020 at 17:22 IST (last updated: Sun, Sep 20, 2020 @ 17:22 IST)

Serene rural landscape - painted in Krita with my XP-Pen Artist 10S.